LLM distillation is becoming a key technique for building high-performing AI models at lower cost: a large "teacher" model is used to train a smaller "student". Meta used its Llama 4 Behemoth to train smaller models, while Google leveraged Gemini for Gemma. Key methods include learning from the teacher's probability distributions, imitating its outputs, and co-training the models together. https://www.marktechpost.com/2026/05/11/understanding-llm-distillation-techniques/ #AIagent #AI #GenAI #AIResearch
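The first two methods can be made concrete with a toy loss calculation. What follows is a minimal sketch, assuming a single next-token position with hand-picked logits. The function names are hypothetical and are not taken from the linked article, and co-training is not shown. `distillation_loss` implements soft-label distillation: the KL divergence between temperature-softened teacher and student distributions, scaled by T² as in Hinton et al. (2015). `hard_label_loss` is a simplified stand-in for "imitating outputs" that uses cross-entropy against the teacher's top token.

```python
import math


def softmax(logits, temperature=1.0):
    """Convert logits to probabilities; higher temperature gives a softer distribution."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def kl_divergence(p, q):
    """KL(p || q) for two discrete distributions over the same vocabulary."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def distillation_loss(student_logits, teacher_logits, temperature=2.0):
    """Soft-label distillation: the student matches the teacher's full distribution.

    Scaling by T^2 keeps gradient magnitudes comparable across temperatures.
    """
    p_teacher = softmax(teacher_logits, temperature)
    p_student = softmax(student_logits, temperature)
    return (temperature ** 2) * kl_divergence(p_teacher, p_student)


def hard_label_loss(student_logits, teacher_logits):
    """Output imitation (simplified, hypothetical): cross-entropy on the teacher's top token.

    Real sequence-level distillation trains on whole teacher-generated texts instead.
    """
    target = max(range(len(teacher_logits)), key=lambda i: teacher_logits[i])
    p_student = softmax(student_logits)
    return -math.log(p_student[target])


if __name__ == "__main__":
    teacher = [2.0, 1.0, 0.1]  # toy logits over a 3-token vocabulary
    student = [1.0, 2.0, 0.1]
    print("soft-label loss:", distillation_loss(student, teacher))
    print("hard-label loss:", hard_label_loss(student, teacher))
```

The soft-label loss carries more signal than the hard-label one because it also rewards the student for reproducing how the teacher ranks the tokens it did *not* pick.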

Related

🤪 Halupedia: Encyclopedia that hallucinates articles on the fly https://github.com/BaderBC/halupedia #ai #hallucinations #wikipedia

When you're too broke for human playtesters... https://youtu.be/OEMxpNJFzlc #gamedev #indiedev #godotengine #ai

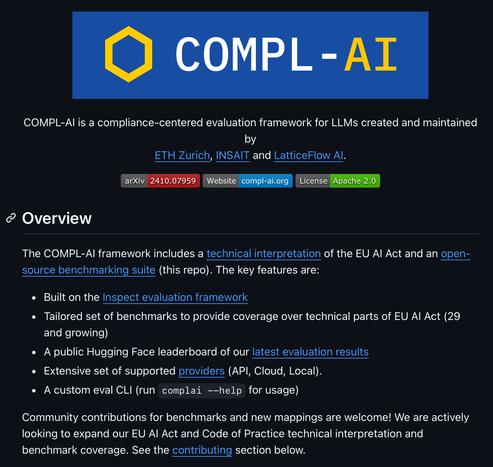

https://github.com/compl-ai/compl-ai #llm #compliance