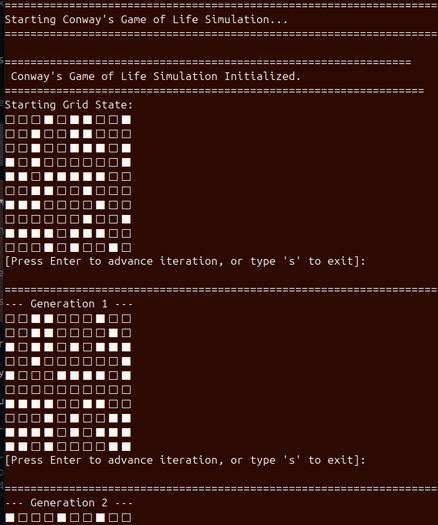

Most small language models are still unfit for reliable agentic use. Gemma4:e4b beat them all, running on 13 GB of VRAM with a 128k-token context. Not bad at all! #LLM #AgentAI
